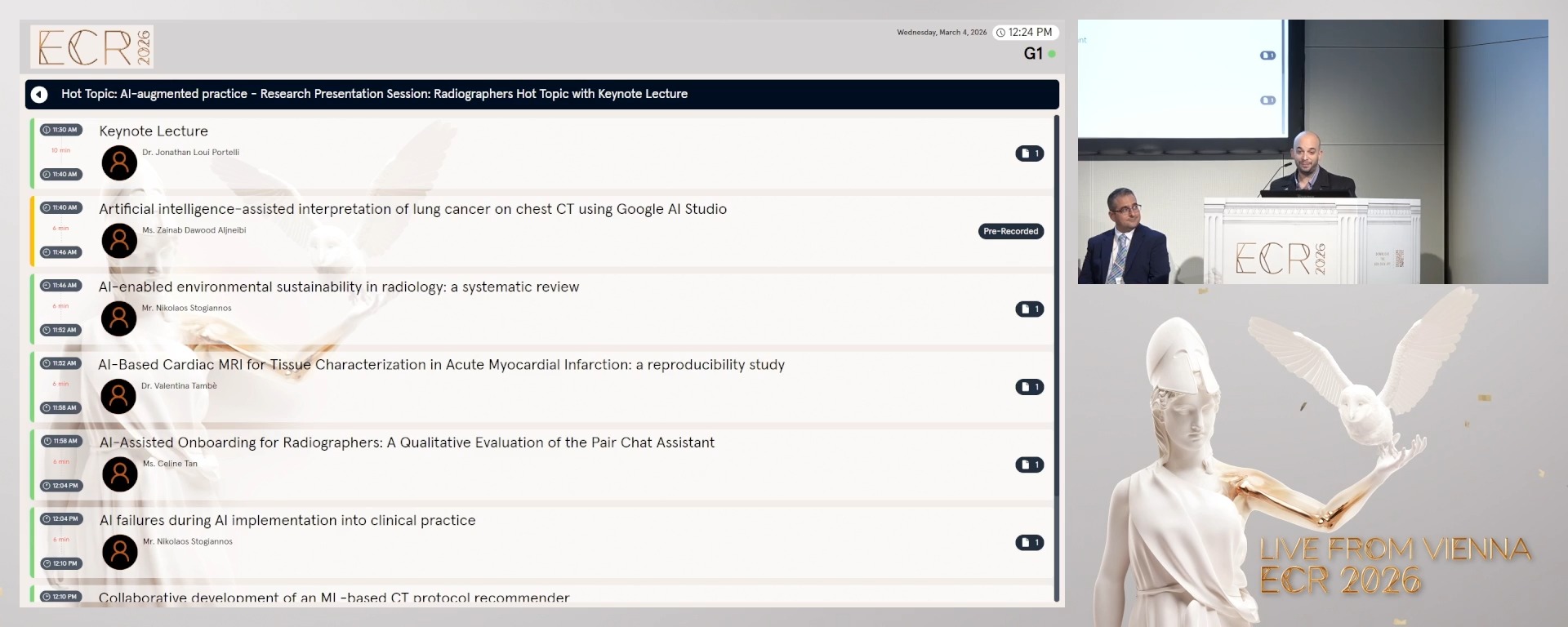

Research Presentation Session: Radiographers Hot Topic with Keynote Lecture

RPS 314 - Hot Topic: AI-augmented practice

10 min

Keynote Lecture

Jonathan Loui Portelli, Msida / Malta

6 min

Artificial intelligence-assisted interpretation of lung cancer on chest CT using Google AI Studio

Zainab Dawood Aljneibi, Abu Dhabi / United Arab Emirates

Author Block: Z. D. Aljneibi, S. Almenhali, L. J. O. C. L. Lança; Abu Dhabi/AE

Purpose: This study explored the diagnostic performance and interpretative behaviour of an artificial intelligence (AI)-enhanced model for detecting lung cancer on chest computed tomography (CT). Particular focus was given to the qualitative consistency of the AI-generated reports.

Methods or Background: An exploratory analysis was conducted using the publicly available IQ-OTH/NCCD dataset, comprising 110 CT cases (55 normal, 15 benign, 40 malignant). A pre-trained convolutional neural network in Google AI Studio was fine-tuned on a subset of images and applied to independent test cases. Quantitative outcomes included diagnostic accuracy, sensitivity, specificity, and area under the receiver operating characteristic curve (AUC). Qualitative analysis evaluated report structure, terminology use, and error patterns.

Results or Findings: The AI model achieved an overall accuracy of 75.5% (sensitivity 74.5%, specificity 76.4%). Malignant cases were identified with high discriminative performance (AUC = 0.902; 95% CI: 0.830–0.964), while benign cases proved more difficult to classify (AUC = 0.615; 95% CI: 0.477–0.754). Qualitative analysis showed consistent use of radiological terminology, with structured descriptions resembling human reporting. In normal cases, terms such as “clear lung fields” confirmed accurate recognition, though occasional references to “ground-glass opacities” reflected oversensitivity to minor changes. Benign cases were described with bilateral opacities and fibrotic changes, but overlapping terminology sometimes led to misclassification. Malignant cases were strongly aligned with features such as “mass” and “lesion.” These interpretative tendencies highlight effective feature recognition but limited specificity in non-malignant contexts.
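The reported accuracy, sensitivity, and specificity can be cross-checked against the case counts given in the methods. The sketch below is illustrative only: it assumes (the abstract does not state this) that the 55 benign and malignant cases were pooled as the positive class against the 55 normal cases, and the confusion-matrix counts are hypothetical values chosen because they reproduce the three reported percentages.

```python
# Hypothetical confusion-matrix counts; these are NOT reported in the abstract.
# Assumption: positives = benign + malignant (15 + 40 = 55), negatives = normal (55).
tp, fn = 41, 14  # positive cases classified correctly / incorrectly
tn, fp = 42, 13  # normal cases classified correctly / incorrectly


def pct(numerator, denominator):
    """Return a percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


sensitivity = pct(tp, tp + fn)              # TP / (TP + FN)
specificity = pct(tn, tn + fp)              # TN / (TN + FP)
accuracy = pct(tp + tn, tp + fn + tn + fp)  # (TP + TN) / all cases

print(f"sensitivity={sensitivity}%, specificity={specificity}%, accuracy={accuracy}%")
```

Under these assumed counts the three figures match the abstract, which suggests the headline metrics describe a normal-versus-abnormal split rather than the three-class problem.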

Conclusion: Google AI Studio showed potential in detecting malignant disease and produced structured reports with consistent terminology. However, its interpretative oversensitivity and limited specificity highlight the need for human oversight and further refinement to reduce misclassification in benign and normal presentations.

Limitations: This study's retrospective design and reliance on a public dataset may limit generalizability.

Funding for this study: Not applicable

Has your study been approved by an ethics committee? Not applicable

Ethics committee - additional information:

6 min

AI-enabled environmental sustainability in radiology: a systematic review

Nikolaos Stogiannos, Corfu / Greece

Author Block: N. Stogiannos1, K. Konstantinidis2, S. Kalari3, S. C. Shelmerdine1, S. Ursprung4, T. Küstner4, K. Nikolaou4, A. G. Rockall5, C. Malamateniou1; 1London/UK, 2Kifisia/GR, 3Athens/GR, 4Tübingen/DE, 5Godalming/UK

Purpose: To explore the potential role of AI-powered applications in enhancing environmental sustainability in radiology.

Methods or Background: Searches were performed across eight electronic bibliographic databases: Google Scholar, PubMed, Wiley Online Library, Embase, the Web of Science platform, the Cochrane Library, CINAHL Ultimate, and the medRxiv database for preprint work, for the publication years 2020–2025. Specific keywords were combined with Boolean operators and filters to optimise searches. Duplicate removal, screening of titles and abstracts, and full-text evaluation were done using the Rayyan.ai platform. The CASP checklists were used to assess risk of bias. Extracted data were analysed using a narrative synthesis approach.

Results or Findings: Out of 87,120 initial sources of evidence, 18 articles were included in this systematic review. AI was identified as an important factor in reducing energy consumption in radiology, by minimising equipment idle time, accelerating MRI acquisition, automating planning, and simplifying workflows. In addition, AI could facilitate low-field imaging or significantly reduce contrast agent dosage by generating virtually enhanced images or restoring signal with minimal contrast agent waste. AI could also contribute to sustainability in imaging by reducing unnecessary examinations through clinical decision support, optimising workforce and patient scheduling, and assisting image analysis to minimise workstation use, which together lower energy consumption and resource use.

Conclusion: This systematic review demonstrates that AI-powered applications hold promise with regard to environmental sustainability in radiology, helping to optimise image acquisition, image analysis, contrast administration, and workflow efficiency. A balance must be struck between energy-intensive AI usage and AI-enhanced energy saving, and the costs and energy demands associated with training AI models and storing data should always be considered.

Limitations: n/a

Funding for this study: n/a

Has your study been approved by an ethics committee? Not applicable

Ethics committee - additional information:

6 min

AI-Based Cardiac MRI for Tissue Characterization in Acute Myocardial Infarction: a reproducibility study

Valentina Tambè, Milan / Italy

Author Block: V. Tambè, D. Capra, B. Loscocco, C. Casamassima, M. Memmedova, F. Secchi; Milan/IT

Purpose: This study investigated the impact of AI-based image denoising and super-resolution in cardiac magnetic resonance (CMR) imaging in patients with acute myocardial infarction who underwent coronary revascularization.

Methods or Background: Myocardial mass, edema, fibrosis, and no-reflow were segmented by an expert reader using semi-automatic software, in both native and AI-enhanced images. The analysis focused on Short Tau Inversion Recovery (STIR) and Late Gadolinium Enhancement (LGE) sequences.

Results or Findings: Twenty-seven patients were included, with no-reflow present in 13. AI-derived measurements demonstrated good agreement with native imaging. For edema percentage, the bias was 3% with limits of agreement (LoA) from –24.3% to +30.4%; for LGE percentage, the bias was 1% with LoA from –17.6% to +19.6%. AI reconstructions tended to underestimate myocardial mass: in T1 sequences, the bias was –9.1 g (LoA –26.2 to +8.0 g), and in T2 sequences, –5.3 g (LoA –49.9 to +39.3 g). Similarly, no-reflow mass and volume were underestimated, with biases of –6.5 g (LoA –16.4 to +3.8 g) and –6.2 mL (LoA –15.6 to +3.3 mL), respectively. In both native and AI-enhanced datasets, significant negative correlations were observed between LVEF and LGE% (native: ρ = –0.616, p < 0.001; AI: ρ = –0.551, p = 0.003) as well as between LVEF and no-reflow mass (native: ρ = –0.652, p = 0.016; AI: ρ = –0.57, p = 0.04), whereas edema showed a moderate negative correlation (native: ρ = –0.501, p = 0.008; AI: ρ = –0.402, p = 0.038).
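For readers less familiar with bias and limits of agreement, the following is a minimal sketch of a conventional Bland-Altman calculation. It assumes the usual definition (bias = mean paired difference, LoA = bias ± 1.96 × SD of the differences) and takes differences as AI-enhanced minus native; the abstract does not state which convention the authors used. The function name and the sample values are hypothetical and are not study data.

```python
from statistics import mean, stdev


def bland_altman(native, enhanced):
    """Return (bias, lower LoA, upper LoA) for paired measurements.

    Differences are taken as enhanced minus native, so a negative bias
    means the AI-enhanced images yield smaller values.
    """
    diffs = [e - n for n, e in zip(native, enhanced)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)  # sample SD of the paired differences
    return bias, bias - half_width, bias + half_width


# Hypothetical myocardial mass values in grams (illustrative only).
native_mass = [120.0, 135.0, 110.0, 142.0, 128.0]
ai_mass = [112.0, 130.0, 104.0, 129.0, 121.0]

bias, lower, upper = bland_altman(native_mass, ai_mass)
print(f"bias = {bias:.1f} g, LoA = {lower:.1f} to {upper:.1f} g")
```

Under this definition the bias sits midway between the two limits, which is a quick sanity check on reported values: for the T1 myocardial mass above, (–26.2 + 8.0) / 2 = –9.1 g.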

Conclusion: A negligible bias was observed between native and AI-derived measurements. Although the limits of agreement were wide, AI-enhanced CMR consistently correlated with systolic function. These findings support the use of AI-based denoising and super-resolution in CMR without significantly affecting myocardial tissue quantification.

Limitations: The limited sample size may have affected the limits of agreement.

Funding for this study: None

Has your study been approved by an ethics committee? Yes

Ethics committee - additional information: IRCCS MULTIMEDICA - ethics committee

6 min

AI-Assisted Onboarding for Radiographers: A Qualitative Evaluation of the Pair Chat Assistant

Celine Tan, Singapore / Singapore

Author Block: C. Tan, L. A. J. Chong; Singapore/SG

Purpose: Onboarding for newly recruited radiographers often relies on didactic lectures and online learning to introduce protocols and practice. While effective, this limits engagement, flexibility, and self-directed learning. Pair Chat is an in-house AI large language model platform that enables staff to build personalised AI bots. A customised bot was created within the Radiography Department to provide interactive, on-demand support for new radiographers during onboarding. This study evaluated the perceived effectiveness of AI-assisted onboarding using the Pair Chat assistant compared with the conventional methods of learning.

Methods or Background: A qualitative exploratory design was adopted. Eight radiographers who had used the Pair Chat assistant for three months participated in a focus group discussion. Data were transcribed verbatim and analysed through a hybrid content–thematic approach. Domains explored included learning effectiveness, confidence, engagement, efficiency, and improvement suggestions.

Results or Findings: Three themes emerged. First, accessibility barriers: reliance on work desktops limited spontaneous use, highlighting the need for mobile access. Second, a trust hierarchy: although valued for rapid, non-judgemental clarification, participants continued to prioritise colleagues and institutional references. This conditional trust reinforced the perception of the AI as a supplementary rather than replacement tool. Third, complementarity: while the assistant offered detailed, searchable content, the absence of graphics and experiential depth reduced its utility compared with lectures.

Conclusion: AI-assisted onboarding can enhance flexibility and learner autonomy, but its effectiveness depends on accessibility and integration with mentorship. A blended model may provide the most sustainable approach.

Limitations: This pilot study is limited by its small sample size, single-site context, and short evaluation period. Findings relied on self-reported perceptions, with potential bias from participant self-selection and group dynamics, and may not be generalisable. Results should therefore be interpreted as preliminary insights to inform larger-scale studies.

Funding for this study: Not Applicable.

Has your study been approved by an ethics committee? Not applicable

Ethics committee - additional information:

6 min

AI failures during AI implementation into clinical practice

Nikolaos Stogiannos, Corfu / Greece

Author Block: N. Stogiannos1, R. Cuocolo2, A. D'Antonoli3, D. Pinto Dos Santos4, H. Harvey1, M. Huisman5, B. Koçak6, M. Klontzas7, C. Malamateniou1; 1London/UK, 2Naples/IT, 3Basel/CH, 4Mainz/DE, 5Nijmegen/NL, 6Istanbul/TR, 7Heraklion/GR

Purpose: To map out potential causes of AI failures that could impede AI implementation, lead to poor patient outcomes, increase financial costs, and add burden to clinical workflows. Potential solutions to mitigate these failures are also presented.

Methods or Background: A diverse group of AI experts in medical imaging, including radiologists, radiographers, computer scientists, and technical physicians, hand-searched all available literature to identify studies related to AI failures in radiology, as well as potential solutions. All eligible papers were then analysed by three group members and assigned to specific categories.

Results or Findings: Three distinct categories of AI failures were identified: a) errors related to AI models (algorithmic bias, lack of diverse and inclusive datasets, poor internal/external testing, failures due to unseen real-world data, suboptimal post-market surveillance, and data safety during AI model decommissioning), b) infrastructure-related failures (hardware/software issues, poor integration into PACS/RIS, network deficiencies), and c) human factors (human-AI interaction, automation bias, resistance to change, publication mishaps, annotation/interpretation errors, ergonomics). Adequate AI training and literacy, continuous monitoring of AI tools, standardized reporting of AI studies, multidisciplinary collaboration, effective leadership, and funding were suggested as potential solutions to the above failures.

Conclusion: AI models can fail at any stage of their lifecycle. Infrastructure issues and human-related factors may be important causes of AI failures.

Limitations: This is not an exhaustive list of AI failures in radiology as it is challenging to identify AI failures in a culture that mostly celebrates success. Also, identification is challenging due to publication bias and the evolving nature of the field.

Funding for this study: N/A

Has your study been approved by an ethics committee? Not applicable

Ethics committee - additional information:

6 min

Collaborative development of an ML-based CT protocol recommender

Benoît Dufour, Sion / Switzerland

Author Block: A. Li1, B. Dufour2, S. Morozov2, N. Heracleous2, B. Rizk2, C. Thouly2; 1Milwaukee/US, 2Sion/CH

Purpose: To collaboratively develop and evaluate a lightweight, data-driven machine learning model for recommending CT Body protocols, and to analyze the effect of procedure-based filtering on performance under data-scarce conditions.

Methods or Background: Efficient CT protocol selection often requires synthesis of multiple data sources and coordination among staff, creating variability and delay; AI-augmented decision support may streamline this step in the radiology workflow. A multicenter French-language dataset of 42,286 adult CT Body studies across 27 protocols was assembled; to emulate scarcity, training was limited to 5,000 studies with stratified partitioning of the remainder into validation (8%) and test (20%). Inputs were the imaging order and the free-text clinical indication; features were derived via statistical text extraction and used as inputs to XGBoost, a decision tree–based model. Given the class imbalance, micro F1 was the primary metric.
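As a reminder of what the primary metric measures, here is a minimal sketch of micro-averaged F1 for single-label classification, in which true positives, false positives, and false negatives are pooled across all classes before F1 is computed. The function name and the protocol labels are hypothetical and serve only as an illustration; this is not the authors' evaluation code.

```python
from collections import Counter


def micro_f1(y_true, y_pred):
    """Micro-averaged F1: pool TP, FP, and FN over all classes, then compute F1."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # the predicted class gains a false positive
            fn[truth] += 1  # the true class gains a false negative
    total_tp = sum(tp.values())
    total_fp = sum(fp.values())
    total_fn = sum(fn.values())
    denominator = 2 * total_tp + total_fp + total_fn
    return 2 * total_tp / denominator if denominator else 0.0


# Hypothetical protocol labels (illustrative only).
y_true = ["chest", "abdomen", "spine", "abdomen", "chest", "spine"]
y_pred = ["chest", "chest", "spine", "abdomen", "abdomen", "spine"]

score = micro_f1(y_true, y_pred)
print(f"micro F1 = {score:.3f}")
```

When every study receives exactly one predicted protocol, each error counts once as a false positive and once as a false negative, so micro F1 equals plain accuracy. Frequent protocols therefore dominate the score.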

Results or Findings: Procedure–protocol analysis showed that certain procedures mapped to multiple protocols, mirroring local workflow. The baseline model (all procedures) achieved a micro F1 of 58%. After filtering out one high-volume procedure (43% of exams), micro F1 increased to 67%. Aggressive filtering (two procedures totaling 60% of exams) reached a micro F1 of 80%. In the baseline model, high-confidence predictions (≥0.80 probability) covered 33% of the test set with 91% accuracy, predominantly for well-defined protocols (e.g., spine). Confusion analysis indicated strong performance in 18/27 protocols, with most errors among protocols with overlapping anatomic coverage.
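The high-confidence result pairs two quantities: coverage (the share of studies whose top predicted probability meets the threshold) and accuracy within that confident subset. The sketch below shows one way these could be computed, assuming each prediction is summarised as a (top probability, correct or not) pair. The function name and the records are hypothetical, not study data.

```python
def high_confidence_summary(records, threshold=0.80):
    """Return (coverage, accuracy) for predictions at or above the threshold.

    records: iterable of (top_probability, is_correct) pairs.
    """
    records = list(records)
    confident = [correct for prob, correct in records if prob >= threshold]
    coverage = len(confident) / len(records) if records else 0.0
    accuracy = sum(confident) / len(confident) if confident else 0.0
    return coverage, accuracy


# Hypothetical predictions (illustrative only).
records = [
    (0.95, True), (0.82, True), (0.60, False),
    (0.85, False), (0.40, True), (0.91, True),
]

coverage, accuracy = high_confidence_summary(records)
print(f"coverage = {coverage:.0%}, accuracy among confident = {accuracy:.0%}")
```

In a deployment along the lines the abstract suggests, studies below the threshold would be routed to expert review rather than protocolled automatically.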

Conclusion: A simple decision tree–based model, coupled with procedure filtering, achieved the target 80% micro F1 on a CT Body dataset under constrained training size, suggesting AI‑augmented protocoling can reliably handle a substantial subset of studies while flagging ambiguous cases for expert review.

Limitations: Generalizability to other sites, languages, and clinical domains requires validation; comparisons with macro‑weighted metrics may further characterize class‑specific behavior.

Funding for this study: Research grant from GEHC

Has your study been approved by an ethics committee? Yes

Ethics committee - additional information: Approved by CER-VD

Discussion